Roadmap

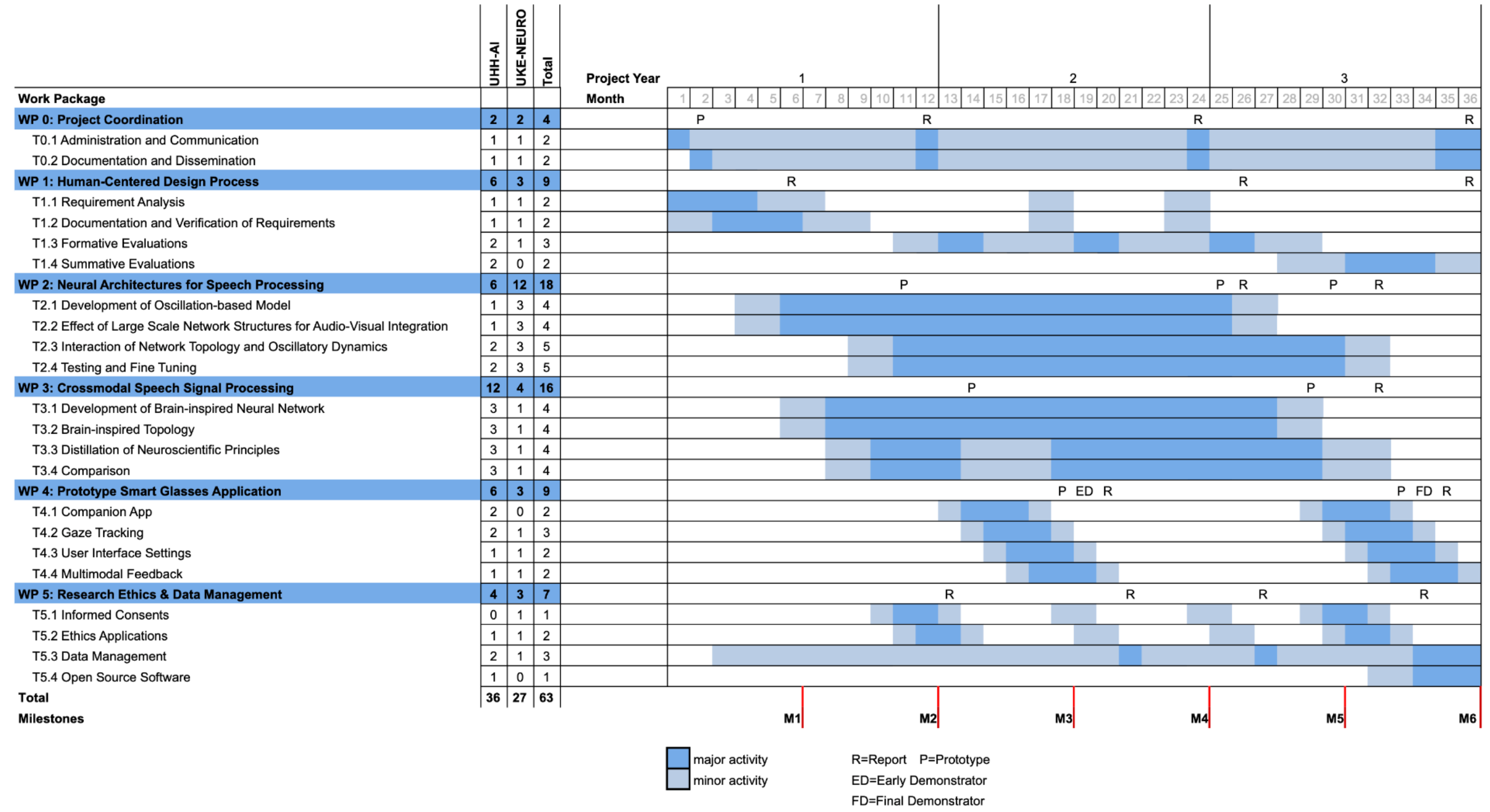

Key Milestones

| Month | Milestone |
|---|---|
| 6 | Requirement Analysis (Documentation and Verification) |
| 12 | First models of neural architectures for speech processing (Oscillatory and Topology) |
| 12 | First evaluations of bio-inspired neural networks |
| 14 | Companion App / VR Testbed |
| 18 | Early demonstrator of Smart Glasses |
| 24 | Neural architectures are integrated into crossmodal speech signal processing |
| 30 | Summative evaluation of Glasses |
| 36 | Final demonstrator and project finalization |

Initial Steps

- Create an initial literature overview
    - Keep it very broad; don't dive into individual papers yet
    - Existing glasses
    - Libraries and frameworks
    - Cocktail party problem
- Initial hypothesis and goals for the dissertation

Phase 1: Entry Point, Glasses with Speech Enhancement (SE) via Beamformer

- Data simulation and virtual testbed
- Research material/experimental setup
    - Comparability to other papers with similar goals
    - UX vs. experimental flexibility (tech should be quickly adaptable)
    - Question: embedded/on-device vs. external computer
    - How to integrate
        - microphone array
        - speakers (in-ear vs. in-frame)
        - gyroscope
        - eye tracking
        - cameras (RGB, infrared, depth, …?)
- Research algorithmic setup
    - Delay-and-sum beamformer, MVDR beamformer
    - Wiener filter
    - What other signal inputs can be used?
- Step 1: Build an MVP that uses head direction for beamforming
- Step 2: Integrate eye tracking and use eye focus for beamforming
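
To make the classical baseline of this phase concrete, here is a minimal NumPy sketch of the three methods named in the algorithmic setup, steered by a single look direction such as head yaw. It is an illustration under stated assumptions, not the project's implementation: the four-microphone layout `MIC_XY`, the far-field planar model, the speed of sound, the diagonal loading factor, and the gain floor are all hypothetical choices, and every function name is invented for this example.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s; assumed value for air at room temperature


def steering_vector(mic_xy, yaw_rad, freq_hz, c=SPEED_OF_SOUND):
    """Far-field steering vector of a planar array for one look direction."""
    mic_xy = np.asarray(mic_xy, dtype=float)
    look = np.array([np.cos(yaw_rad), np.sin(yaw_rad)])  # unit vector towards the source
    delays = -(mic_xy @ look) / c  # microphones closer to the source hear it earlier
    return np.exp(-2j * np.pi * freq_hz * delays)


def delay_and_sum_weights(d):
    """Delay-and-sum: phase-align the channels and average them."""
    return d / len(d)


def mvdr_weights(d, noise_cov, loading=1e-6):
    """MVDR: minimise noise power subject to unit gain in the look direction."""
    m = len(d)
    # Small diagonal loading (an assumed regulariser) keeps the solve well conditioned.
    r = noise_cov + loading * (np.trace(noise_cov).real / m) * np.eye(m)
    r_inv_d = np.linalg.solve(r, d)
    return r_inv_d / (d.conj() @ r_inv_d)


def wiener_gain(noisy_psd, noise_psd, floor=0.05):
    """Per-bin Wiener-style gain from power spectral density estimates."""
    gain = 1.0 - np.asarray(noise_psd) / np.maximum(noisy_psd, 1e-12)
    return np.clip(gain, floor, 1.0)


# Hypothetical four-microphone layout on the frame, coordinates in metres.
MIC_XY = np.array([[0.0, 0.07], [0.0, -0.07], [-0.08, 0.07], [-0.08, -0.07]])

# Target straight ahead (yaw 0), one interferer at 60 degrees, evaluated at 1 kHz.
target = steering_vector(MIC_XY, yaw_rad=0.0, freq_hz=1000.0)
interferer = steering_vector(MIC_XY, yaw_rad=np.deg2rad(60.0), freq_hz=1000.0)
noise_cov = 0.01 * np.eye(4) + np.outer(interferer, interferer.conj())

w_ds = delay_and_sum_weights(target)
w_mvdr = mvdr_weights(target, noise_cov)
# For one frequency bin x (shape: microphones), the output is y = w.conj() @ x.
```

In this sketch, Step 1 would feed the gyroscope's head yaw into `yaw_rad`, and Step 2 would replace it with the gaze direction from eye tracking; the beamformer code itself would not need to change. Both weight vectors pass the look direction undistorted, while the MVDR weights additionally attenuate the modelled interferer.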

Phase 2: Audio-only Neural SE

- Progress from purely algorithmic approaches to neural SE
- Use audio + view direction for SE
- SP lab research by Kristina Tesch
- Bio-inspired approaches like LinOSS (UKE)

Phase 3: Audio-Visual Fusion SE

- Augment glasses with outward-facing cameras
- Use audio-visual fusion techniques for SE
- Which sensing modality to use in noisy environments?
    - Structured light (e.g. RGB/infrared grid)
    - Time-of-flight (lidar)
- Which models?
    - Mathematical vs. bio-inspired